OEM suspension programs often look rock-solid during development: the prototype lands on the target damping curve, the dyno plot is clean, and early ride reports feel reassuring.

Then SOP (start of production) arrives, the moment the line has to repeat that result at volume, and the same program can start bleeding time and credibility: inconsistent damping feel from batch to batch, early leakage complaints, uneven warranty signals across regions, and constant sorting at the receiving dock.

It’s not hard to build a great prototype. It’s hard to keep production centered and stable week after week.

Why OEM suspension programs look successful in development but fail after SOP

This is the core pattern behind motorcycle suspension OEM programs that fail after SOP: the design may be validated, but the manufacturing system isn’t proven to hold CTQs (critical-to-quality characteristics) batch after batch.

Prototype success is not production readiness

Hand-built samples are usually built by your best people under your best conditions.

Parts are often hand-selected.

Processes are slower and more controllable.

Engineering attention is continuous.

Tooling is new, and fixtures are not worn.

That’s why prototype units can be excellent while the production system is still immature.

Prototype ≠ scalable system.

SOP introduces real-world variation that prototypes never test

Once the line runs for volume, you expose variables that were muted or absent during development:

operator-to-operator differences across shifts

material lot variation as procurement expands

fixture wear and tooling drift over time

line-speed pressure that changes how assemblies are executed

A good reference point is how manufacturing teams distinguish validation stages: early validation proves the design intent; production validation proves the system can repeat output at scale (see Formlabs’ overview of EVT/DVT/PVT and mass production validation). In other words, the difference between prototype validation and production validation for suspension is that the second one proves the system, not the hero sample.

Production environment changes everything.

The real failure point is consistency, not performance

A single dyno curve can be “right” while the batch is unstable.

One unit can be tuned to perfection.

A batch tells you whether the process is centered and controlled.

Field quality is the result of distribution, not the best sample.

Stability > peak performance.

The core issue is system variability, not suspension design

Supply chain fragmentation creates hidden inconsistency

In emerging markets, “the supply chain” often isn’t one chain. It’s a multi-tier network with uneven standards.

Common instability drivers include:

multi-tier outsourcing (Tier 2 and Tier 3 changes you can’t see)

non-uniform sourcing for the same part number

inconsistent upstream process controls and acceptance logic

Even when drawings don’t change, inputs are not identical across batches, so lot-to-lot variation is a standard source of variation in production systems. Accendo Reliability explicitly lists lot-to-lot variation as a distinct category for that reason.

Variation starts before assembly.

Cost pressure reduces process discipline

Emerging-market programs typically have aggressive cost targets and frequent price-down cycles. When cost becomes the dominant KPI, process discipline degrades in predictable ways:

inspection shortcuts under pricing pressure

reduced process control to increase output

efficiency prioritized over stability

Cost optimization often creates quality instability.

Production knowledge is not aligned with engineering intent

Engineering capability concentrates in the prototype phase.

In production, the work shifts to a different reality:

the assembly sequence is optimized for throughput

controls are simplified to keep the line moving

decisions are made under schedule pressure

Design intent ≠ manufacturing execution.

Why suspension problems amplify during scaling

Small process deviations become large field issues

Suspension is sensitivity-driven. Small process shifts can create big differences in feel and durability.

Examples:

minor changes in damping force at key velocities can show up as instability or harshness

small sealing variation can become leakage, then oil loss, then damping fade

This is the same basic mechanism that makes suspension tunable in the first place: hydraulic damping depends on flow restrictions and valve behavior (UTI’s overview of motorcycle suspension systems explains the hydraulic damping principle and shim-based cartridges at a high level).

Suspension is highly sensitivity-driven.

Batch-to-batch variation drives most real failures

A typical failure arc in OEM programs:

first batch acceptable

later batches drift as tooling wears, suppliers change, and throughput increases

field complaints arrive after scaling

That’s why batch-to-batch variation in shock absorber behavior is the signal to watch. It tells you whether your approved sample represents a controlled distribution or a one-off win.

SOP reveals hidden variability.
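One way to make batch-to-batch variation visible is to split the spread of a dyno measurement into a within-batch part and a between-batch part. The sketch below is a minimal one-way variance-components estimate, assuming equal-size batches. The function name, batch labels, and force values are made up for illustration and are not measurements from any program.

```python
from statistics import mean


def variance_components(batches):
    """Estimate within-batch and between-batch variance (one-way, balanced).

    batches: dict mapping a batch label to a list of measurements,
             e.g. damping force in N at one dyno velocity.
    Returns (within_var, between_var).
    """
    sizes = {len(values) for values in batches.values()}
    if len(sizes) != 1:
        raise ValueError("this sketch assumes equal-size batches")
    n = sizes.pop()   # units measured per batch
    k = len(batches)  # number of batches
    if n < 2 or k < 2:
        raise ValueError("need at least 2 batches with 2 units each")

    batch_means = {label: mean(values) for label, values in batches.items()}
    # Mean of batch means equals the grand mean because batches are balanced.
    grand_mean = mean(batch_means.values())

    ss_within = sum(
        (x - batch_means[label]) ** 2
        for label, values in batches.items()
        for x in values
    )
    ms_within = ss_within / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in batch_means.values()) / (k - 1)

    # Method-of-moments estimate; clamp at zero when batches are indistinguishable.
    between_var = max(0.0, (ms_between - ms_within) / n)
    return ms_within, between_var


# Hypothetical rebound force (N) at one velocity, four units from each of three batches.
force_by_batch = {
    "batch_A": [500.0, 502.0, 498.0, 500.0],
    "batch_B": [510.0, 512.0, 508.0, 510.0],
    "batch_C": [490.0, 492.0, 488.0, 490.0],
}
within_var, between_var = variance_components(force_by_batch)
print(f"within-batch variance:  {within_var:.2f}")
print(f"between-batch variance: {between_var:.2f}")
```

In this invented data set each batch is tight on its own, yet the batch means sit far apart, so the between-batch component dominates. That is the pattern an approved sample from a single batch cannot reveal.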

Scale exposes weak process control

If your output depends on who built the unit, you don’t have a process—you have a dependency.

Scaling requires controls that survive shift changes, tool wear, and line-speed pressure.

Why “good samples” do not guarantee OEM success

Suppliers optimize for sample approval, not production stability

Prototype builds often receive extra attention:

tighter informal inspection

slower, careful assembly

engineering “touches” that don’t exist at line speed

Samples are optimized artifacts, not system proof.

Sample approval hides real manufacturing risk

The same drawing can be executed in different ways:

different fixture strategy

different torque discipline

different fill/bleed execution

different measurement rigor

Approval ≠ capability.

Dyno results do not reflect production variation

Dyno testing is valuable, but it’s usually run under controlled conditions.

A dyno curve from one or two samples is not evidence of batch stability. The evidence you need is the distribution: what the curve looks like across a lot, after changeovers, and after the line has been running for weeks.

Test results ≠ production stability.
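To illustrate the difference between "every unit passed" and "the process is centered," here is a minimal sketch of a Cpk-style capability index for damping force at a single dyno velocity. It uses the overall sample standard deviation, which many references would call Ppk rather than Cpk. The acceptance window and the force values are hypothetical.

```python
from statistics import mean, stdev


def capability_index(values, lsl, usl):
    """Distance from the mean to the nearer spec limit, in units of 3 sigma."""
    if len(values) < 2:
        raise ValueError("need at least two measurements")
    sigma = stdev(values)  # overall sample standard deviation
    if sigma == 0:
        raise ValueError("no spread in the data; index is undefined")
    mu = mean(values)
    return min(usl - mu, mu - lsl) / (3 * sigma)


# Hypothetical acceptance window for force (N) at one dyno velocity.
LSL, USL = 94.0, 106.0

centered_lot = [98.0, 100.0, 102.0, 100.0, 100.0]
shifted_lot = [x + 3.0 for x in centered_lot]  # same spread, mean drifted upward

for name, lot in (("centered", centered_lot), ("shifted", shifted_lot)):
    all_in_window = all(LSL <= x <= USL for x in lot)
    print(f"{name}: all units in window={all_in_window}, "
          f"index={capability_index(lot, LSL, USL):.2f}")
```

Both lots pass unit by unit, but the shifted lot's index is half that of the centered one. A handful of passing dyno curves would not show that loss of margin; a lot-level statistic does.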

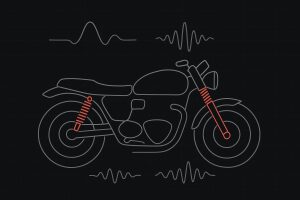

What actually defines a reliable suspension supplier

Consistency across batches is the real KPI

A mature supplier is defined by repeatability.

stable output across batches

stable mean and spread after changeovers

predictable performance in the field

Consistency is the core manufacturing value.

Controlled manufacturing behavior matters more than tuning ability

Tuning skill can make one unit great.

Production readiness depends on whether the supplier can:

reduce operator dependency

define CTQs (Critical-to-Quality characteristics) that link engineering intent to shop-floor control

run a control plan that keeps those CTQs stable

Process control > tuning skill.

Ability to explain variation is a maturity signal

When drift happens, mature suppliers can explain it.

They can trace variation to:

lot change

tooling or fixture wear

shift differences

measurement system issues

This is where MSA (Measurement System Analysis) matters. If you can’t trust the measurement, you can’t trust the conclusion. ASQ defines gage repeatability and reproducibility (GR&R) as the process used to evaluate a gauging instrument’s accuracy by ensuring measurements are repeatable and reproducible.

Transparency indicates system maturity.
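As a rough illustration of what a GR&R study computes, the sketch below applies the standard two-way ANOVA variance-components method to a balanced, fully crossed study (every operator measures every part the same number of times). The function name, the data layout, and the numbers are assumptions for illustration; a real study would follow the supplier's or customer's MSA procedure.

```python
from statistics import mean


def gauge_rr(data):
    """data[part][operator][trial] -> measurement, balanced crossed design."""
    p, o, r = len(data), len(data[0]), len(data[0][0])
    if p < 2 or o < 2 or r < 2:
        raise ValueError("need at least 2 parts, 2 operators, 2 trials")

    grand = mean(x for part in data for cell in part for x in cell)
    part_means = [mean(x for cell in part for x in cell) for part in data]
    op_means = [mean(x for part in data for x in part[j]) for j in range(o)]
    cell_means = [[mean(cell) for cell in part] for part in data]

    ms_part = o * r * sum((m - grand) ** 2 for m in part_means) / (p - 1)
    ms_op = p * r * sum((m - grand) ** 2 for m in op_means) / (o - 1)
    ms_int = r * sum(
        (cell_means[i][j] - part_means[i] - op_means[j] + grand) ** 2
        for i in range(p) for j in range(o)
    ) / ((p - 1) * (o - 1))
    ms_err = sum(
        (x - cell_means[i][j]) ** 2
        for i in range(p) for j in range(o) for x in data[i][j]
    ) / (p * o * (r - 1))

    # Variance components; negative estimates are clamped to zero.
    repeatability = ms_err
    interaction = max(0.0, (ms_int - ms_err) / r)
    operator = max(0.0, (ms_op - ms_int) / (p * r))
    part_var = max(0.0, (ms_part - ms_int) / (o * r))

    grr = repeatability + operator + interaction
    total = grr + part_var
    pct = 100 * (grr / total) ** 0.5 if total > 0 else float("nan")
    return {"repeatability": repeatability, "reproducibility": operator + interaction,
            "part": part_var, "pct_grr": pct}


# Hypothetical study: 3 parts, 2 operators, 2 trials each.
# Operator 2 reads 1.0 higher than operator 1 on every part.
biased = [[[v, v], [v + 1.0, v + 1.0]] for v in (10.0, 12.0, 14.0)]
result = gauge_rr(biased)
print(f"%GRR of total variation: {result['pct_grr']:.1f}")
```

In this made-up case the gauge repeats perfectly for each operator, so all of the measurement error comes from the operator offset. That is the kind of finding that tells you whether drift is in the product or in the way it is measured.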

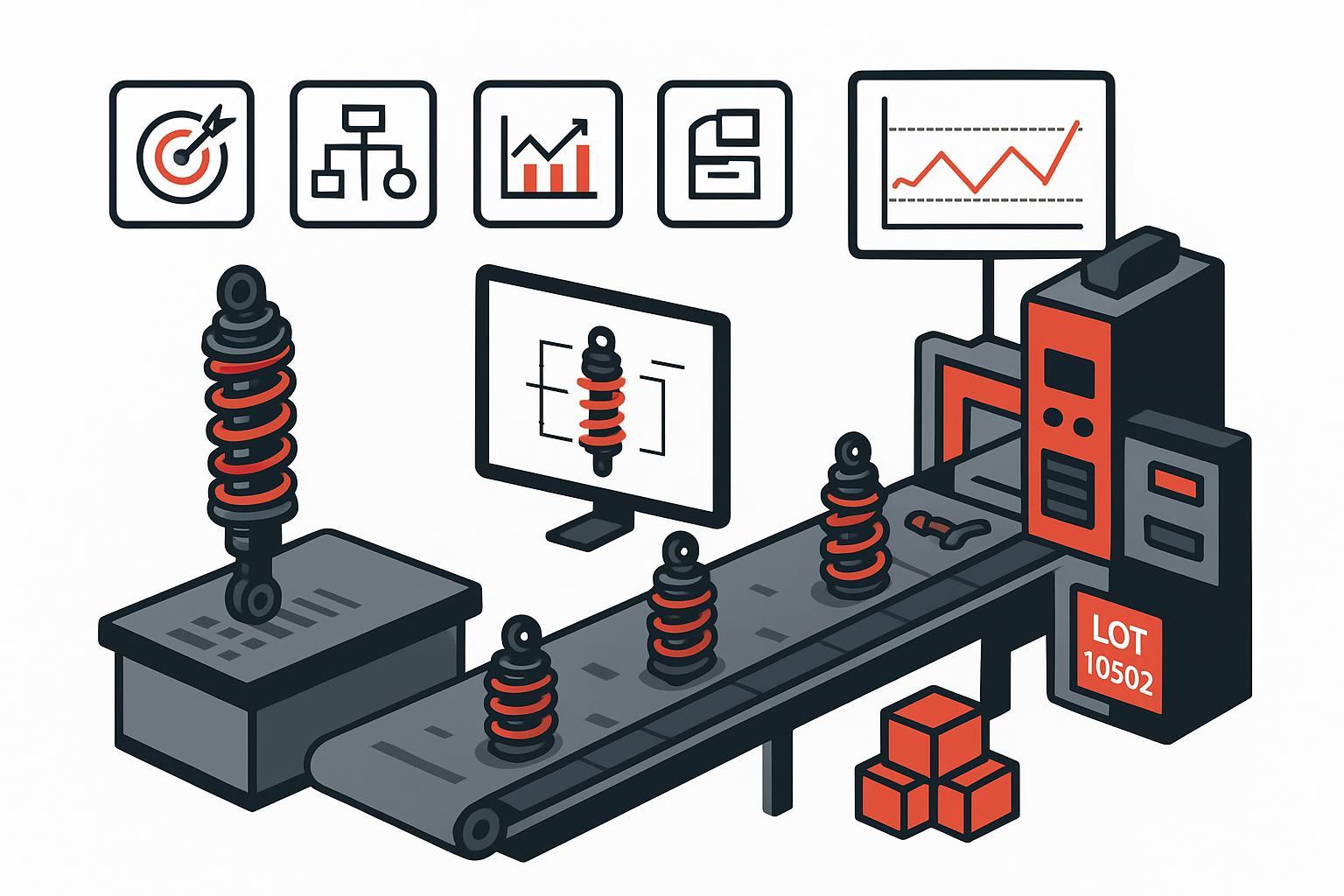

If you need language that buyers and suppliers can align on, APQP (Advanced Product Quality Planning) and PPAP (Production Part Approval Process) are designed to turn “we can build it” into documented evidence.

A practical APQP/PPAP-style supplier review boils down to four evidence blocks:

CTQs tied directly to performance and durability

a control plan that keeps those CTQs stable at line speed

MSA (including GR&R) for the measurements you’ll use to accept/reject parts

traceability that can connect field complaints back to lot/shift/process parameters

This isn’t paperwork. It’s how you catch drift before it becomes a warranty event.

Why emerging markets increase OEM suspension risk

Scaling speed exceeds process maturity

Demand can scale faster than the factory system matures.

Volume exposes problems that were irrelevant at low build rates: drift across shifts, tool wear effects, fixture degradation, and parameter stability.

A useful way to frame this is validation maintenance: MedTech Intelligence notes that scale-up often increases variability (tool wear, thermal drift, fixture degradation) and that SPC should be tied to validation maintenance, with signals like parameter drift across shifts and lot-to-lot material response.

Growth outpaces control systems.
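A simple way to watch for parameter drift is an individuals control chart, where limits are set from a baseline period and later readings are checked against them. The sketch below uses the usual individuals/moving-range formula (center line plus or minus 2.66 times the average moving range, where 2.66 is 3 divided by the d2 constant 1.128). The monitored parameter and all values are hypothetical.

```python
from statistics import mean


def individuals_chart_limits(baseline):
    """Return (lcl, center, ucl) for an individuals chart from baseline readings."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline readings")
    center = mean(baseline)
    # Average absolute difference between consecutive readings.
    mr_bar = mean(abs(b - a) for a, b in zip(baseline, baseline[1:]))
    half_width = 2.66 * mr_bar  # 3 / d2, with d2 = 1.128 for a moving range of 2
    return center - half_width, center, center + half_width


def out_of_limits(readings, lcl, ucl):
    """Indices of readings that fall outside the control limits."""
    return [i for i, x in enumerate(readings) if x < lcl or x > ucl]


# Hypothetical baseline: damping force (N) at one velocity during a stable period.
baseline = [100.0, 102.0, 100.0, 98.0, 100.0]
lcl, center, ucl = individuals_chart_limits(baseline)

# Hypothetical later readings, e.g. one per shift.
later = [101.0, 99.0, 106.0, 100.0]
print(f"limits: {lcl:.2f} to {ucl:.2f}")
print("out-of-limit readings at indices:", out_of_limits(later, lcl, ucl))
```

Control limits come from the process's own behavior, not from the drawing tolerance, so a reading can sit inside the spec window and still signal that something has changed.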

Mixed supply ecosystems increase variability

Emerging-market ecosystems often mix imported and local components.

That can mean:

different supplier standards coexisting

uneven documentation quality

inconsistent traceability depth

Ecosystem complexity increases instability.

Weak feedback loops between field and factory

If field failures are not systematically analyzed, the factory keeps repeating them.

Multi-tier networks make this worse. Visibility problems and mismatched management systems are common obstacles in multi-tier supply chains; QIMAone summarizes several multi-tier supply chain visibility challenges that reduce the ability to detect upstream issues early.

No feedback = repeated failure.

How OEMs can reduce failure risk before scaling

If you want a suspension OEM supplier evaluation checklist that engineering, supplier quality engineers (SQEs), and procurement can actually use, start by separating “sample proof” from “process proof,” then demand repeatability evidence.

Separate prototype validation from production validation

Treat samples as design proof, not production proof.

A practical gating approach:

prototype validation: performance targets and functional durability

production validation: CTQs, measurement capability, process capability, and reaction plans

Two-stage thinking is required.

Evaluate suppliers based on repeatability, not samples

If you want to avoid “good samples, bad SOP,” ask for evidence that the supplier can repeat outcomes:

batch-to-batch dyno sampling rules and acceptance windows

a traceability model (batch, shift, key process parameters) that matches your warranty risk

Evidence > demonstration.
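A traceability model is only useful if it lets you slice complaints by the factors that might explain them. The following is a minimal sketch of such a record and a lookup, assuming each unit has a serial number tied to a batch, a shift, and one key input lot. All field names and values are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class UnitRecord:
    serial: str
    batch: str
    shift: str
    oil_lot: str  # hypothetical example of a key input lot


def complaint_rate_by(records, complaint_serials, field):
    """Complaint rate per value of `field` (e.g. 'batch', 'shift', 'oil_lot')."""
    built = Counter(getattr(r, field) for r in records)
    failed = Counter(
        getattr(r, field) for r in records if r.serial in complaint_serials
    )
    return {key: failed[key] / built[key] for key in built}


# Hypothetical build records and field complaints.
records = [
    UnitRecord("S1", "B1", "day", "OIL-1"),
    UnitRecord("S2", "B1", "night", "OIL-1"),
    UnitRecord("S3", "B2", "day", "OIL-2"),
    UnitRecord("S4", "B2", "night", "OIL-2"),
]
complaints = {"S3", "S4"}

print(complaint_rate_by(records, complaints, "batch"))
print(complaint_rate_by(records, complaints, "shift"))
```

Here the complaints cluster by batch and not by shift, which points containment at one batch instead of the whole population. Without the batch and shift fields on each record, that question cannot be answered at all.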

Build escalation and correction logic early

A stable program assumes drift will happen at some point. The question is whether the control system catches it early.

Define upfront:

what triggers containment (stop-ship conditions)

who owns corrective action

response-time expectations

what evidence is required to release production again

Control system > inspection system.

Decision framework: when the risk of post-SOP failure in a motorcycle suspension OEM program is low enough to scale

Green zone (low risk)

stable batch output

repeatable dyno results across defined sampling rules

predictable field behavior

supplier can explain and control variation

Yellow zone (controlled risk)

sample success but limited batch evidence

partial visibility into CTQs and control plan

early signs of variation (shift-to-shift drift, lot sensitivity)

Red zone (high risk)

prototype-only validation

no batch stability proof

no clear explanation of process controls or drift mechanism
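The three zones can be written down as an explicit rule so that engineering, quality, and procurement apply the same logic. The sketch below is one possible encoding, not a standard: the field names and the exact mapping from the bullet points to booleans are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScaleReadiness:
    batch_output_stable: bool         # stable output shown across batches
    dyno_repeatable: bool             # repeatable under defined sampling rules
    field_behavior_predictable: bool
    variation_explained: bool         # supplier can explain and control variation
    has_any_batch_evidence: bool      # at least limited batch data exists
    controls_partly_visible: bool     # some visibility into CTQs / control plan


def risk_zone(r):
    """Map the evidence to 'green', 'yellow', or 'red'."""
    if (r.batch_output_stable and r.dyno_repeatable
            and r.field_behavior_predictable and r.variation_explained):
        return "green"
    # Prototype-only validation: no batch proof and no view of process controls.
    if not r.has_any_batch_evidence and not r.controls_partly_visible:
        return "red"
    return "yellow"


# Hypothetical supplier with good samples, limited batch data, partial control plan.
candidate = ScaleReadiness(
    batch_output_stable=False,
    dyno_repeatable=True,
    field_behavior_predictable=False,
    variation_explained=False,
    has_any_batch_evidence=True,
    controls_partly_visible=True,
)
print(risk_zone(candidate))
```

Writing the rule down forces agreement on what counts as evidence before the scaling decision is made, which is where most disagreements between functions tend to surface.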

Where Kingham Tech fits in this evaluation

If your core risk is SOP instability, you’re not just selecting a shock absorber—you’re selecting a manufacturing system.

If you’re an OEM buyer, SQE, or distributor building a program in an emerging-market supply ecosystem, the fastest way to reduce post-SOP surprises is to qualify the supplier’s system before you qualify the product. In practice, that means three checks: CTQs tied to ride feel and durability, proven measurement capability, and traceability that’s strong enough to contain issues fast.

Kingham Tech works with partners who take that stability-first approach, with an OEM/ODM workflow designed to repeat results at scale.

If you want a quick sanity check, share your current supplier’s evidence pack (control plan summary, MSA/GR&R, dyno sampling rules, and traceability approach). We’ll highlight common gaps that cause drift after SOP and suggest what to tighten before you scale.

Learn more about our OEM/ODM workflow: Kingham Tech OEM/ODM partner